Summary

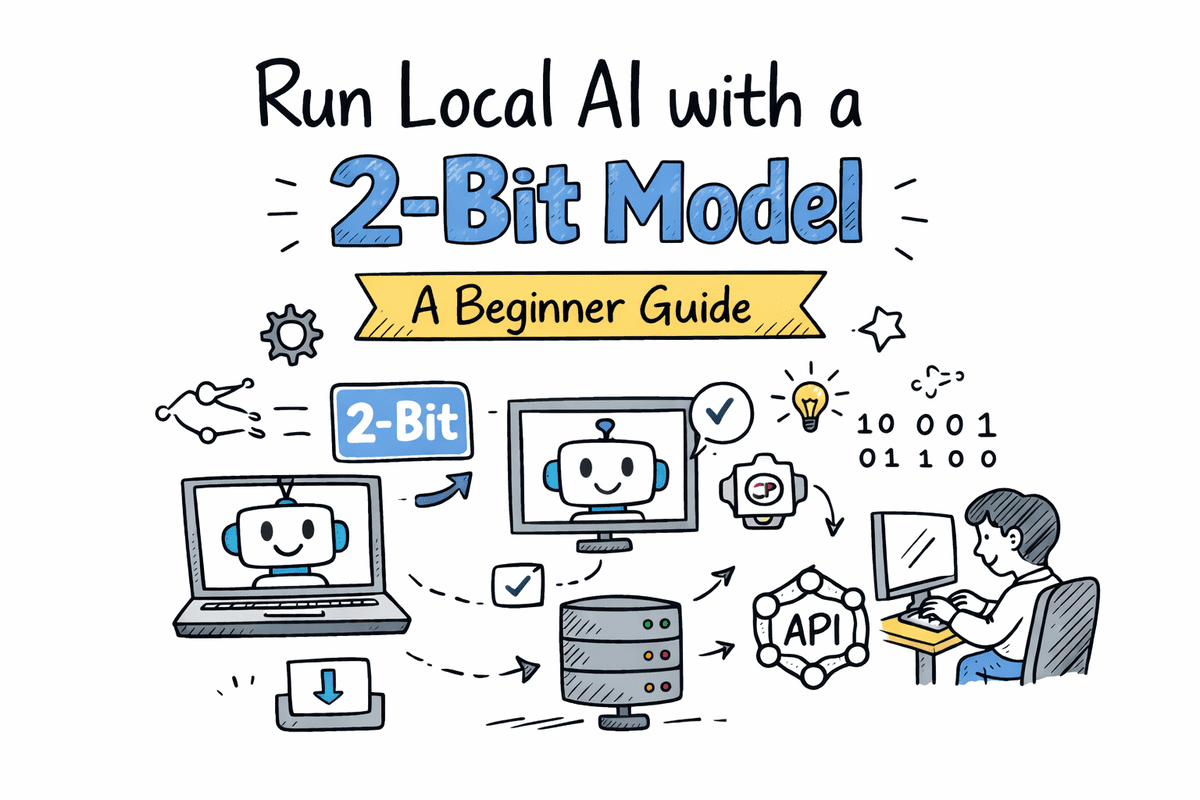

Running small AI models locally with BitNet gives BI professionals a way to use AI chat and inference without sending data to external servers.

New Opportunities with BitNet

The article explains how to install the BitNet b1.58 model and set up a fully local AI chat and inference server. This is made possible by bitnet.cpp, Microsoft's open-source inference framework for 1-bit large language models, which lets BI professionals run AI applications directly on their own machines without depending on cloud infrastructure.
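The "b1.58" in the model name refers to ternary weights: every weight is one of -1, 0 or +1, which carries log2(3) ≈ 1.58 bits of information. The BitNet b1.58 paper describes an "absmean" scheme that divides a weight matrix by its mean absolute value, then rounds and clips the result to that range. The sketch below illustrates the idea on a plain Python list. It is a simplified illustration of the quantization concept, not bitnet.cpp's actual kernel code, and the function name `absmean_ternary` is hypothetical.

```python
import math


def absmean_ternary(weights, eps=1e-5):
    """Quantize float weights to {-1, 0, +1} with absmean scaling.

    Illustrative sketch only (hypothetical helper, not bitnet.cpp code).
    Returns the ternary weights and the scale gamma, so that
    each original weight is approximated by gamma * ternary_weight.
    """
    # gamma: mean absolute value of the weights
    gamma = sum(abs(w) for w in weights) / len(weights)
    # Scale, round to the nearest integer, then clip to [-1, 1]
    ternary = [max(-1, min(1, round(w / (gamma + eps)))) for w in weights]
    return ternary, gamma


weights = [0.42, -0.07, -0.9, 0.15, 0.03, -0.33]
q, gamma = absmean_ternary(weights)
print(q, round(gamma, 4))  # [1, 0, -1, 0, 0, -1] 0.3167
print(f"bits per ternary weight: {math.log2(3):.2f}")  # 1.58
```

Because each weight needs only about 1.58 bits instead of 16, the model is small enough to run on an ordinary laptop CPU, which is what makes a fully local setup practical.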

Implications for the BI Market

This development responds to growing demand for privacy and data sovereignty in the BI sector. By running AI models locally, companies keep sensitive data on their own infrastructure and reduce their reliance on external vendors. General-purpose frameworks such as TensorFlow and PyTorch can also run models locally, but bitnet.cpp is built specifically for lightweight inference of 1-bit models on ordinary hardware, which makes local deployment easier and more accessible.

Key Takeaway for BI Professionals

BI professionals should consider revising their AI strategies to include local solutions that offer more control and security. Experimenting with BitNet and similar tools is a practical way to learn how such solutions can support data-driven decision-making.
